Found 93 talks archived in Cosmology

Abstract

The standard model of cosmology has been quite successful in accounting for a broad range of data, at least until the past few years. But as the quality of cosmological measurements has continued to improve, tension has grown between observations and the predictions of LCDM. In this talk, we will address several "problem" areas, and then focus on the most recent: the emergence of a rather strong non-inflationary signature in the angular correlation function of the microwave background. Inflation is critical to the internal self-consistency of the standard model. Yet after four decades, we still lack convincing evidence that it ever happened.

Abstract

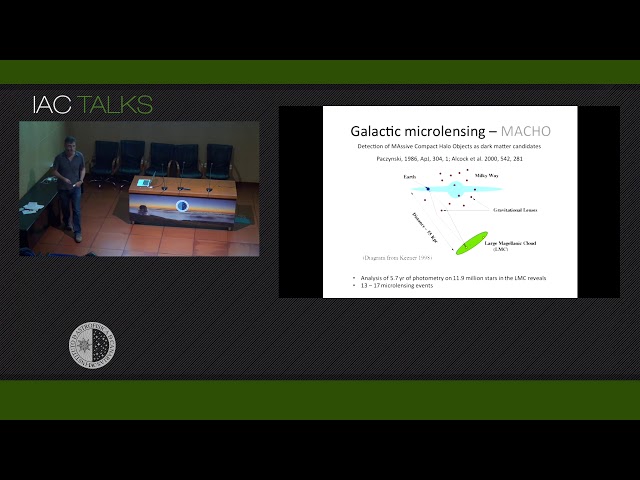

The lack of detection of elementary particles that could explain dark matter and the recent detection of gravitational waves by the LIGO experiment have renewed interest in the hypothesis that dark matter can be made of Primordial Massive Black Holes (PMBHs). We review very briefly the outcomes and limits of the classical MACHO experiment, used to probe the dark matter in the halo of the Milky Way through galactic microlensing, and introduce the more universal scenario of quasar microlensing. Quasar microlensing is sensitive to any population of compact objects in the lens galaxy, to their abundance and to their mass. Using microlensing data from 24 lensed quasars, we conclude that the fraction of mass in any type of MACHO is negligible outside the 0.05 MSun < M < 0.45 MSun mass range. This excludes any significant population of intermediate-mass PMBHs. We estimate that the fraction of halo mass in microlenses is 20%. The ranges of masses and abundances agree with those expected for the stellar component. Using the mean mass estimate and some limits derived from multiwavelength microlensing observations, we speculate about the stellar Present Day Mass Function.

Abstract

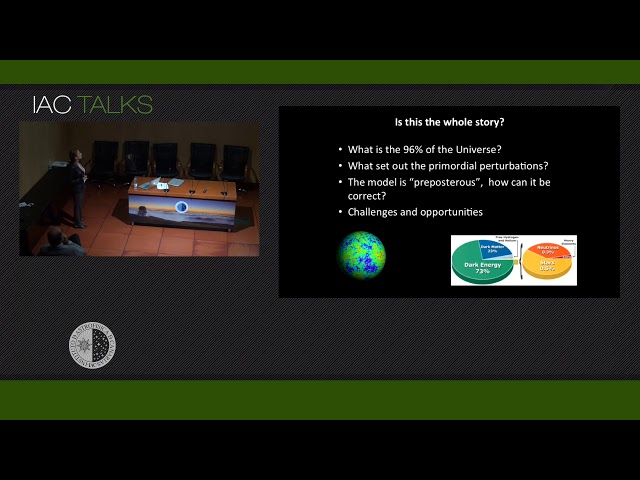

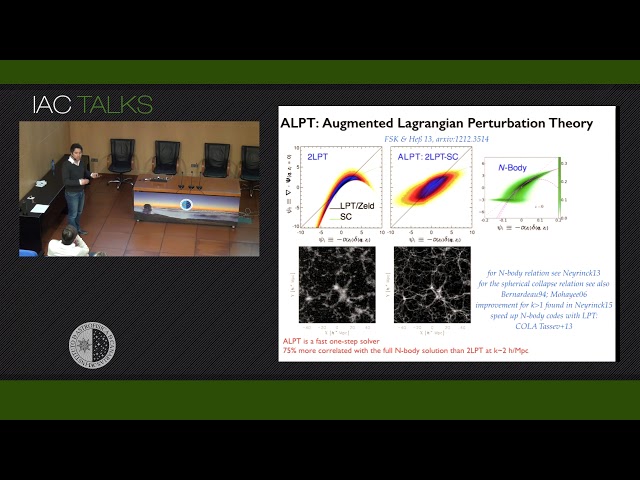

The standard cosmological model has been established and its parameters are now measured with unprecedented precision. However, there is a big difference between modelling and understanding. The next decade will see the era of large surveys: a large coordinated effort of the scientific community in the field is ongoing to map the cosmos, producing an exponentially growing amount of data. This will shrink the statistical errors. But precision is not enough: accuracy is also crucial. Systematic effects may be in the data but may also be in the model used in their interpretation. I will present a small selection of examples where I explore approaches to help the transition from precise to accurate cosmology. This selection is not meant to be exhaustive or representative; it just covers some of the problems I have been working on recently.

Abstract

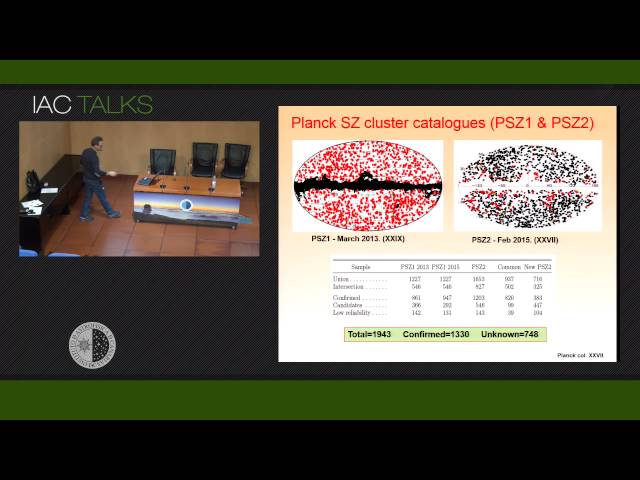

The Planck satellite provides, for the first time, the possibility of detecting galaxy clusters over the full sky through their Sunyaev-Zeldovich (SZ) effect signature (Planck Col XXIX, 2013). Planck SZ catalogs I and II include more than 1900 sources, of which 700 remain unknown. Studying the purity of these samples and characterizing the SZ sources is essential to perform cosmology with cluster counts. With this aim in mind, the IAC-Planck group is performing the optical validation and characterization of these samples through two long-term observing programs at Canary Island observatories, the ITP 13B15A and the long-term 15B-17A. In this talk we will present intermediate results of this validation program. Using photometric and spectroscopic information (mainly multi-object techniques), we estimate redshifts and dynamical masses in order to minimize the errors in the Msz-Mdyn scaling relation and the SZ cluster mass function, which allows a better determination of cosmological parameters (mainly Omega_m, sigma_8 and neutrino mass) from the Planck SZ survey.

Abstract

The cosmological large-scale structure encodes a wealth of information about the origin and evolution of our Universe. Galaxy redshift surveys provide a 3-dimensional picture of the luminous sources in the Universe. These are, however, biased tracers of the underlying dark matter field. I will discuss the different components that are relevant to modelling galaxy bias, ranging from deterministic nonlinear, through non-local, to stochastic components. These effective bias ingredients let us reduce computational time and memory requirements and efficiently produce mock galaxy catalogues. Such catalogues are useful to study survey systematics, test analysis tools, and compute covariance matrices for a robust analysis of the data. Moreover, this description can be implemented in inference analysis methods to recover the dark matter field and its peculiar velocity field. I will show some examples based on the largest sample of luminous red galaxies to date, from the final BOSS SDSS-III data release.

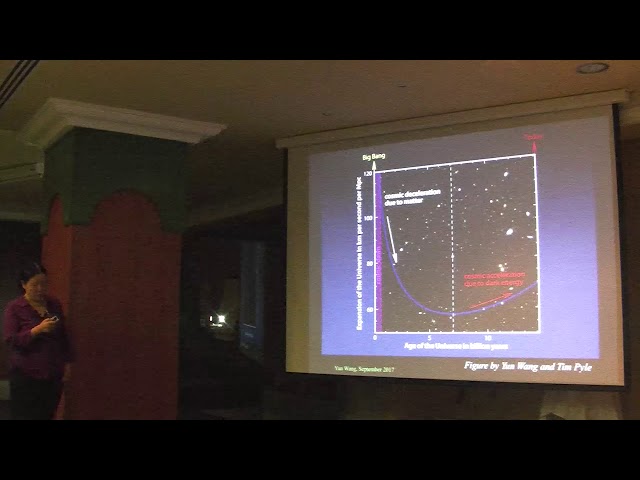

Abstract

The Cosmic Microwave Background (CMB), the fossil light of the Big Bang, is the oldest light that one can ever hope to observe in our Universe. The CMB provides us with a direct image of the Universe when it was still an "infant" - 380,000 years old - and has enabled us to obtain a wealth of cosmological information, such as the composition, age, geometry, and history of the Universe. Yet, can we go further and learn about the primordial universe, when it was much younger than 380,000 years old, perhaps as young as a tiny fraction of a second? If so, this gives us hope of testing competing theories about the origin of the Universe at ultra-high energies. In this talk I present the results from the Wilkinson Microwave Anisotropy Probe (WMAP) satellite, to which I contributed, and then discuss the recent results from the Planck satellite (in which I am not involved). Finally, I discuss future prospects for our quest to probe the physical conditions of the very early Universe.

Abstract

I will present results from the X-Shooter Lens Survey (XLENS). With XLENS we unambiguously separate the stellar from the dark-matter content in the internal region of lens early-type galaxies (ETGs) to understand their interplay and to probe directly their formation and dynamical evolution. We combine precise strong gravitational lensing and dynamical constraints on the mass distribution with high signal-to-noise spectroscopy covering the entire rest-frame visible to the NIR. In this talk I will present results obtained on a sample of very massive lens ETGs from the SLACS Survey, with velocity dispersions greater than 250 km/s and redshift > 0.1.

First I will show how to constrain the low-mass end of the Initial Mass Function (IMF) directly from galaxy optical spectra using a new set of non-degenerate optical spectroscopic indices which are strong in cool giants and dwarfs and almost absent in main sequence stars (Spiniello et al., 2014a). I will present unambiguous evidence that the low-mass end of the IMF is not universal. Then, I will demonstrate that the combination of this SSP modelling with a fully self-consistent joint lensing+dynamics analysis (Barnabè et al., 2012) allows us to disentangle IMF slope variations from internal dark-matter variations and, for the first time, to simultaneously put constraints on the IMF cutoff mass (Barnabè et al., 2013; Spiniello et al., in prep).

Abstract

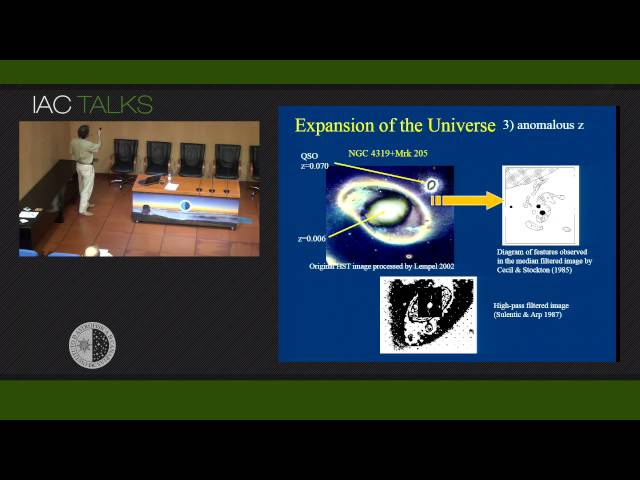

Almost all cosmologists accept nowadays that the redshift of the galaxies is due to the expansion of the Universe (cosmological redshift), plus some Doppler effect of peculiar motions, but can we be sure of this fact by means of some other independent cosmological test? Here I will review some recent tests: CMBR temperature versus redshift, time dilation, the Hubble diagram, the Tolman or surface brightness test, the angular size test, the UV surface brightness limit and the Alcock-Paczynski test. Some tests favour expansion and others favour a static Universe. Almost all the cosmological tests are susceptible to the evolution of galaxies and/or other effects. Tolman or angular size tests need to assume very strong evolution of galaxy sizes to fit the data with the standard cosmology, whereas the Alcock-Paczynski test, an evaluation of the ratio of observed angular size to radial/redshift size, is independent of it.

Upcoming talks

- Runaway O and Be stars found using Gaia DR3, new stellar bow shocks and search for binaries. Mar Carretero Castrillo. Tuesday April 30, 2024 - 12:30 GMT+1 (Aula)

- Detecting GWs in the muHz: natural and artificial satellites as GW detectors. Prof. Diego Blas. Thursday May 2, 2024 - 10:30 GMT+1 (Aula)