Found 61 talks archived in Computing

Abstract

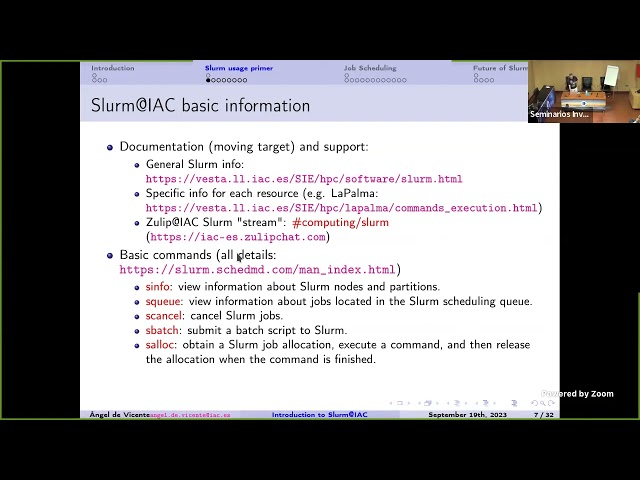

Slurm Workload Manager (formerly known as Simple Linux Utility for Resource Management, or SLURM), or simply Slurm, is a free and open-source job scheduler for Linux and Unix-like kernels, used by many of the world's supercomputers and computer clusters. While Slurm has been used at the IAC on the LaPalma supercomputer and on deimos/diva for a long time, we are now also starting to use it on public "burros" (and on project burros that request it), in order to ensure a more efficient and balanced use of these powerful machines, which are shared amongst many users. While using Slurm is quite easy, we are aware that it involves some changes for users. To help you understand how Slurm works and how best to use it, in this talk I will focus on: why we need queues; an introduction to Slurm usage; Slurm configuration in LaPalma/diva/burros; use cases (including interactive jobs); ensuring fair usage amongst users and efficient use of the machines; etc. [If you are already a Slurm user and have specific questions/comments, you can post them in the #computing/slurm stream in IAC-Zulip (https://iac-es.zulipchat.com) and I'll try to cover them during the talk.]

Abstract

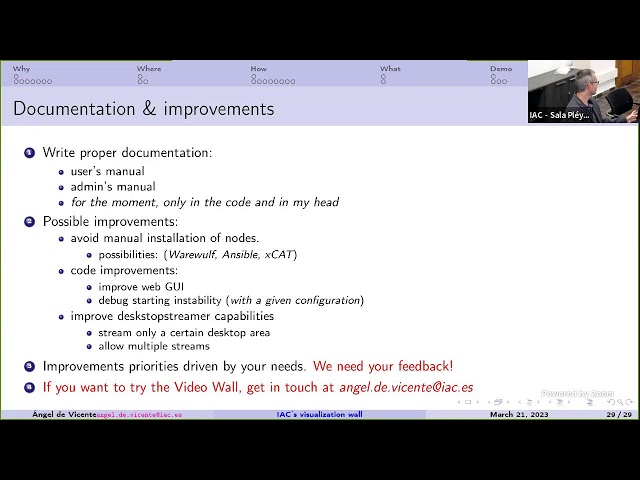

In a time when we deal with extremely large images (be it from computer simulations or from extremely powerful telescopes), visualizing them can become a challenge. If we use a regular monitor, we have two options:

1) fit the image to our monitor resolution, which involves interpolation and thus losing information and the ability to see small image details;

2) zoom in on small parts of the image to view them at full resolution, which involves losing context and the global view of the full image.

To alleviate these problems, display walls of hundreds of Megapixels can be built, which allow us to visualize small details of the images in full resolution while keeping a larger image context in view. For example, one of the world's highest resolution tiled displays is Stallion (https://www.tacc.utexas.edu/vislab/stallion, at the TACC in Texas, USA), with an impressive resolution of 597 Megapixels (an earlier version of the system can be seen in use at https://tinyurl.com/mt7atad9).

At the IAC we have built a more modest display wall (133 Megapixels), which you have probably already seen in action in one of our recent press releases (https://tinyurl.com/4bwtxvec). In this talk I will introduce this new visualization facility (which any IAC researcher can use) and discuss some design issues, possible current and future uses, limitations, etc.

Abstract

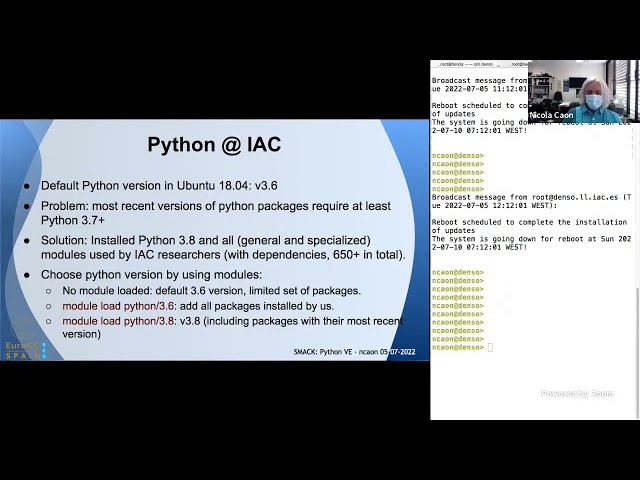

The first part of this talk will present an overview of the tool "module" and its main commands and flags. "module" provides dynamic modification of the user’s environment, supporting multiple versions of an application or a library without conflicts. In the second part, we’ll first explain what Python virtual environments are, and describe three actual cases in which they are used. We’ll then illustrate a practical example of installing a Python virtual environment and duplicating it on a different platform.

Abstract

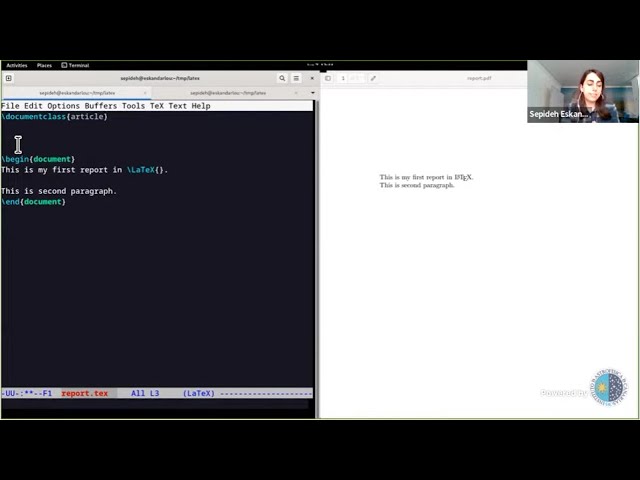

In the 16th SMACK, the very basics of LaTeX were introduced, showing how to make a basic document from scratch, set the printable size, insert images and tables, etc. In this session, we will go into more advanced features that are also commonly helpful when preparing a professional document (while letting you focus on your exciting scientific discovery, and not have to worry about the style of the output). These features include automatically referencing different parts of your document using labels (this allows you to easily shuffle figures, sections or tables), making all references to various parts of your text clickable (greatly simplifying things for your readers), using macros (to avoid repetition or to import your analysis results automatically), adding a bibliography, keeping your LaTeX source and your top directory clean, and finally using Make to easily automate the production of your document.

Lecture notes: https://gitlab.com/makhlaghi/smack-talks-iac/-/blob/master/smack-17-latex-b.md

CEFCA

Abstract

LaTeX is a professional typesetting system to create a ready-to-print or ready-to-publish (usually PDF!) document (usually papers!). LaTeX is the format used by arXiv, and many journals, when you want to submit your scientific papers. With LaTeX, you "program" the final document (text, figures, tables, bibliography, etc.) through a plain-text (source) file. When you run LaTeX on your source, it will parse it and find the best way to blend everything into a nice and professionally designed PDF document. LaTeX therefore allows you to focus on the actual content of your text, tables and plots, and not have to worry about the final design (the "style" file provided by the journal will do all of this for you automatically). This is in contrast to "What-You-See-Is-What-You-Get" (or WYSIWYG) editors, like Microsoft Word or LibreOffice Writer, which force you to worry about style in the middle of content writing (which is very frustrating). Since the source of a LaTeX document is plain text, you can use version-control systems like Git to keep track of your changes and updates (Git was introduced in SMACK 5, SMACK 6 and SMACK 8). This feature of LaTeX allows easy collaboration with your co-authors, and is the basis of systems like Overleaf. Previously (in SMACK 11), some advanced tips and tricks were given on the usage of LaTeX. This SMACK session aims to complement that with a hands-on introduction for those who are just starting to use LaTeX. It is followed by SMACK 17 on other basic features that are necessary to get comfortable with LaTeX.

Lecture notes: https://gitlab.com/makhlaghi/smack-talks-iac/-/blob/master/smack-16-latex.md

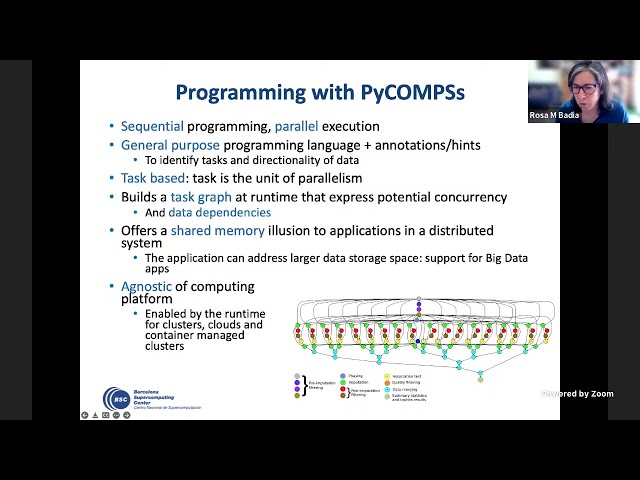

Abstract

PyCOMPSs is a task-based programming model in Python that enables simple sequential codes to be executed in parallel on distributed computing platforms. It is based on adding Python decorators to the functions of the code to indicate those that can be executed in parallel with one another. The runtime takes care of the different parallelization and resource management actions, as well as of the distribution of the data across the different nodes of the computing infrastructure. It is installed on multiple supercomputers of the RES, like MareNostrum 4 and now LaPalma. The talk will present an overview of PyCOMPSs, two demos with simple examples and a hands-on session on LaPalma on how we can parallelize EMCEE workloads.

Slides and Examples: https://gitlab.com/makhlaghi/smack-talks-iac

Abstract

Eclipse Foundation AISBL[1] is the biggest Open Source organization within the EU. It is the home of over 400 projects[2] covering a wide range of knowledge areas. It also hosts 18 groups of companies (Working Groups) forming an ecosystem of over 300 organizations[3] that supports the development of many of these projects, as well as the creation of a variety of specifications within different industries.

Eclipse Foundation has a long history of fruitful relations with research and development projects[4] and organizations worldwide, and specifically in Europe.

During this session, we will give an introduction to the Eclipse Foundation, emphasizing our main value proposition, followed by a short description of some of the most relevant projects in which we are involved, especially in the area of Research.

We will conclude the presentation by describing how the Eclipse Foundation interacts with these R&D organizations and institutions, as a necessary step towards jointly exploring potential avenues of collaboration in the near future.

Abstract

Mathematics of atmospheric fronts: the Surface Quasi-Geostrophic (SQG) equation is a relevant model for understanding the evolution of atmospheric fronts. It also represents a mathematical challenge, because of its non-linear and non-local character, which illustrates the rôle of mathematics in the development of science.

This colloquium will be held in person in the Aula

CEFCA

Abstract

Did you ever want to re-run your project from the beginning, but run into trouble because you forgot one step? Do you want to run just one part of your project and ignore the rest? Do you want to run it in parallel with many different inputs using all the cores of your computer? Do you want to design a modular project, with re-usable parts, avoiding long files that are hard to debug?

Make is a well-tested solution to all these problems. It is independent of the programming language you use. Instead of having one long piece of code that is hard to debug, you can connect its components into a chain. Make will allow you to automate your project and retain control of how its parts are integrated. This SMACK seminar will give an overview of this powerful tool.

Gitlab link: https://gitlab.com/makhlaghi/smack-talks-iac/-/tree/master/

CEFCA

Abstract

Containers are portable environments that package up code and all its dependencies so that an application can run quickly and reliably from one computing environment to another. Most people are probably familiar with full virtualization environments (such as VirtualBox), so in this talk we will explain the main differences between full virtualization and containers (sometimes called light-weight virtualization), and when to use each.

At the same time, not all container technologies have the same goals and/or approaches. Docker is the most mature container offering, but it is geared mainly towards micro-services. Singularity is a newer contender, with an emphasis on mobility of compute for scientific computing. We will introduce both tools, showing how to create and use containers with each of them, while discussing real-life examples of their use.

The lecture notes can be found here:

https://gitlab.com/makhlaghi/smack-talks-iac/-/blob/master/smack-13-docker.md

Upcoming talks

- The effect of magnetic fields on galaxy evolution, Dr. Enrique López-Rodríguez, Thursday April 18, 2024 - 10:30 GMT+1 (Aula)

- EMO-1: Construyendo un observatorio casero (EMO-1: Building a homemade observatory), Enol Matilla Blanco, Friday April 19, 2024 - 10:30 GMT+1 (Aula)